What Is AI, Machine Learning, and Deep Learning?

Three terms the internet loves to mix up: here’s what they actually mean, no jargon required.

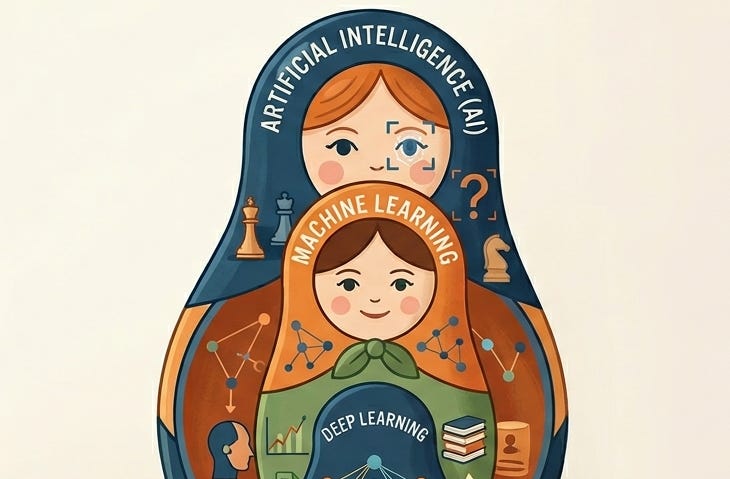

You’ve heard all three terms. You’ve probably used them interchangeably. But AI, machine learning, and deep learning are not the same thing, and understanding the difference is the first step to understanding why AI systems are inherently fragile, how their “learning” can be turned against them, and why they often behave in ways that defy human logic.

This post is the first in our AI Security series, where we bridge the gap between high-level hype and technical reality. Before we dive into the specialized vulnerabilities of these systems, we need to cover the basics.

By establishing a clear, jargon-free understanding of how these technologies differ and how they learn, we lay the groundwork for the more complex security and architectural topics to follow in this series.

AI Is the Big Tent

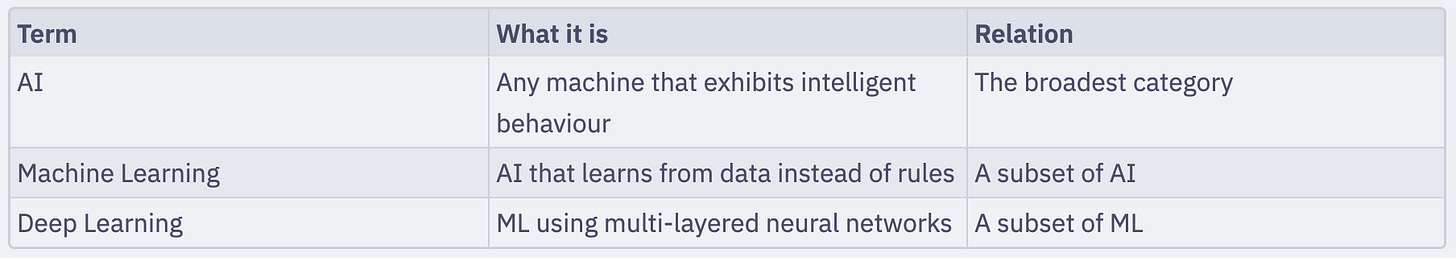

Artificial intelligence (AI) is the broadest term. It refers to any system that exhibits intelligent behavior — reasoning, problem-solving, learning, or decision-making — that we’d normally associate with humans.

That definition is deliberately wide. A chess program that follows handwritten rules counts as AI. So does a neural network that generates images from text. They’re very different technologies, but both fall under the AI umbrella.

The key idea is that AI is the goal (machine intelligence), not a specific technique.

Machine Learning Is How Most Modern AI Actually Works

Machine learning (ML) is a subset of AI. Instead of writing explicit rules, you show the system thousands (or millions) of examples, and it figures out the patterns on its own.

Think of it this way. You could write rules to identify spam email: “if the subject contains ‘FREE MONEY’, mark as spam.” But attackers adapt. Rules break. Machine learning takes a different approach: show the system 10 million emails labeled “spam” or “not spam”, and it learns to recognize the patterns itself — including patterns you never thought to write a rule for.
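To make the contrast concrete, here is a toy sketch in Python. The four training emails, the `rule_based_is_spam` rule, and the word-counting scheme (a tiny naive Bayes classifier with add-one smoothing) are illustrative assumptions, not how any production spam filter works; real filters train on vastly more data and richer features.

```python
import math
from collections import Counter

# Hand-written rule: catches exactly the phrase we thought of, nothing else.
def rule_based_is_spam(email: str) -> bool:
    return "free money" in email.lower()

# Hypothetical labeled training data (real filters use millions of emails).
TRAINING = [
    ("claim your free money now", "spam"),
    ("winner claim your prize now", "spam"),
    ("meeting moved to friday", "ham"),
    ("lunch on friday with the team", "ham"),
]

def train(examples):
    """Count how often each word appears in each class (naive Bayes)."""
    word_counts = {"spam": Counter(), "ham": Counter()}
    class_counts = Counter()
    for text, label in examples:
        class_counts[label] += 1
        word_counts[label].update(text.lower().split())
    vocab = set(word_counts["spam"]) | set(word_counts["ham"])
    return word_counts, class_counts, vocab

def learned_is_spam(email: str, model) -> bool:
    word_counts, class_counts, vocab = model
    total = sum(class_counts.values())
    scores = {}
    for label in ("spam", "ham"):
        # Start from the prior: how common this class was in training.
        score = math.log(class_counts[label] / total)
        n_words = sum(word_counts[label].values())
        for word in email.lower().split():
            # Add-one smoothing so an unseen word doesn't zero out the score.
            score += math.log((word_counts[label][word] + 1) / (n_words + len(vocab)))
        scores[label] = score
    return scores["spam"] > scores["ham"]

model = train(TRAINING)
new_email = "urgent claim your prize"  # never seen as-is; "urgent" is a new word
print(rule_based_is_spam(new_email))      # False: no rule matches
print(learned_is_spam(new_email, model))  # True: learned from similar examples
```

Nobody wrote a rule about “prize” or “claim”; the classifier picked those up from the labeled examples, which is exactly the strength (and, as later posts discuss, the weakness) of learning from data.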

The core principle: ML systems generalize. They learn from past examples and apply that learning to new, unseen data. That’s what makes them powerful. It’s also what makes them fragile in ways traditional software isn’t — a topic we’ll come back to throughout this series.

Deep Learning Is ML With Many Layers

Deep learning (DL) is a subset of machine learning. It uses artificial neural networks, loosely inspired by how neurons connect in the brain, with many layers stacked on top of each other. That’s the “deep” part.

Each layer learns to recognize increasingly abstract features. In an image recognition system:

Layer 1 might detect edges

Layer 5 might detect shapes

Layer 20 might detect “cat ears”
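Mechanically, “stacking layers” just means feeding each layer’s output into the next. The toy Python sketch below shows only that plumbing: the layer sizes and random weights are hypothetical, and an untrained network like this detects nothing at all. Training (not shown) is what adjusts the weights until layers respond to edges, shapes, and eventually cat ears.

```python
import random

def relu(x: float) -> float:
    # A common nonlinearity: pass positive values through, zero out negatives.
    return max(0.0, x)

def make_layer(n_in: int, n_out: int, rng: random.Random):
    """One fully connected layer: a weight per input-output pair, a bias per output."""
    weights = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases

def apply_layer(layer, inputs):
    weights, biases = layer
    return [relu(sum(w * x for w, x in zip(row, inputs)) + b)
            for row, b in zip(weights, biases)]

def forward(layers, inputs):
    # "Deep" = many layers, each transforming the previous layer's output.
    activations = inputs
    for layer in layers:
        activations = apply_layer(layer, activations)
    return activations

rng = random.Random(0)  # fixed seed so the example is reproducible
sizes = [8, 16, 16, 16, 4]  # hypothetical: 8 inputs, three hidden layers, 4 outputs
network = [make_layer(a, b, rng) for a, b in zip(sizes, sizes[1:])]
output = forward(network, [0.5] * 8)
print(len(network), "layers ->", len(output), "outputs")
```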

Deep learning is why we can now build systems that recognize faces, transcribe speech, translate languages, and generate text with remarkable fluency. Much of the AI you interact with today, from voice assistants to ChatGPT, runs on it.

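The hierarchy, in plain terms:

Artificial intelligence: the goal, machines that behave intelligently, by any method

Machine learning: a subset of AI, systems that learn patterns from examples instead of following handwritten rules

Deep learning: a subset of machine learning, built on neural networks with many layers

Every deep learning system is a machine learning system, and every machine learning system is an AI system, but not the other way around.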

Why Compute Beat Cleverness

Here’s one of the most important, and counterintuitive, lessons from 70 years of AI research.

Researchers spent decades trying to build cleverer algorithms: handcrafting rules, encoding human knowledge, designing elegant mathematical models. Those approaches were consistently outperformed by one simple strategy: throw more data and more computing power at a simpler approach.

Richard Sutton, a pioneer in AI research, called this “the bitter lesson” in 2019: general methods that leverage computation are ultimately the most effective, by a large margin.

What this means in practice: modern AI progress is driven less by brilliant new algorithms and more by scale, meaning bigger datasets, more powerful GPUs, and more parameters. GPT-3, the forerunner of the GPT-3.5 models behind the original ChatGPT, has 175 billion parameters. OpenAI has not published parameter counts for its later models, but they are widely believed to be larger still.
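A “parameter” is one learned number, such as the weight on a single connection. As a rough illustration of how quickly counts add up, here is a small Python helper for a stack of plain fully connected layers (weights plus biases). The layer sizes are hypothetical, and the formula covers only dense layers; GPT-3 uses the transformer architecture, so this is not how its 175 billion is tallied.

```python
def dense_param_count(layer_sizes: list[int]) -> int:
    """Weights (n_in * n_out) plus biases (n_out) for each consecutive pair of layers."""
    return sum(n_in * n_out + n_out
               for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))

# Hypothetical small classifier for 28x28-pixel images: 784 inputs, 10 outputs.
print(dense_param_count([784, 512, 512, 10]))    # 669706
# Doubling the hidden width: the hidden-to-hidden layer alone grows about 4x.
print(dense_param_count([784, 1024, 1024, 10]))  # 1863690
```

Because the connections between two layers grow with the product of their widths, making a network wider or deeper inflates the parameter count much faster than intuition suggests.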

This has a direct security implication. Scale means complexity, and complexity means more attack surface. A system with 175 billion parameters is not something any human can fully inspect or understand. That opacity is a security property — and not a good one.
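Some back-of-the-envelope arithmetic shows why. The sketch below assumes 2 bytes per parameter (16-bit precision, a common storage format) and a hypothetical reviewer who examines one parameter per second, nonstop; both figures are assumptions chosen for illustration.

```python
PARAMS = 175_000_000_000  # GPT-3's published parameter count

def storage_gb(n_params: int, bytes_per_param: int = 2) -> float:
    # Assumes 16-bit (2-byte) values; 32-bit storage would double this.
    return n_params * bytes_per_param / 1e9

def years_to_inspect(n_params: int, params_per_second: float = 1.0) -> float:
    # Hypothetical reviewer checking one number per second, with no breaks.
    seconds_per_year = 365.25 * 24 * 60 * 60
    return n_params / params_per_second / seconds_per_year

print(f"{storage_gb(PARAMS):.0f} GB of raw weights")                # 350 GB of raw weights
print(f"{years_to_inspect(PARAMS):,.0f} years at one per second")  # 5,545 years at one per second
```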

What Is AI Actually Good At?

A quick litmus test helps here. AI tends to work well when:

The problem isn’t already solved by simpler means

You have enough good-quality training data

Some margin of error is acceptable

The patterns you’re learning from are relatively stable over time

It tends to fail — sometimes catastrophically — when:

The situation is genuinely novel (unlike anything in the training data)

100% accuracy is required

The underlying patterns change faster than the model can be retrained

The training data was biased, poisoned, or just plain wrong

That last bullet is where security gets interesting. The training data is a trust boundary. If an attacker can influence what a model learns from, they can influence what the model does, and the effect is baked into the trained model, where it is hard to spot. More on that in part 4 of this series.
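A toy sketch of the idea in Python: a one-dimensional classifier that learns a cutoff halfway between the average “benign” and average “malicious” score in its training data. The scores, the threshold rule, and the injected examples are all hypothetical; real poisoning attacks and defenses are far more involved. Note that the attacker never touches the model’s code, only its training data.

```python
from statistics import mean

def train_threshold(examples):
    """Learn a cutoff halfway between the mean score of each class."""
    benign = [score for score, label in examples if label == "benign"]
    malicious = [score for score, label in examples if label == "malicious"]
    return (mean(benign) + mean(malicious)) / 2

def classify(score, threshold):
    return "malicious" if score >= threshold else "benign"

# Hypothetical "suspiciousness" scores with honest labels.
clean_data = [(1, "benign"), (2, "benign"), (3, "benign"),
              (7, "malicious"), (8, "malicious"), (9, "malicious")]

# Attacker slips in high-scoring samples mislabeled as benign.
poisoned_data = clean_data + [(12, "benign"), (12, "benign"), (12, "benign")]

clean_t = train_threshold(clean_data)
poisoned_t = train_threshold(poisoned_data)
print(clean_t, classify(7, clean_t))        # 5.0 malicious
print(poisoned_t, classify(7, poisoned_t))  # 7.5 benign
```

Three mislabeled rows were enough to move the learned cutoff, and a score-7 input that used to be flagged now slips through. Nothing in the classification code looks wrong.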

Conclusion

AI, ML, and deep learning are not interchangeable buzzwords. They’re a nested hierarchy of increasingly specific techniques, and machine learning and deep learning share one core idea: learn patterns from data rather than encode rules by hand.

What makes this matter for security is exactly what makes it powerful: these systems learn behaviors that nobody explicitly programmed. That means the attack surface includes the data, the training process, the model file, and the inference pipeline — not just the application code sitting on top.

The rest of this series builds the foundation you need to understand all of that. Next up: how we got from “AI” being coined as a term in 1956 to ChatGPT in 2022 — and what the detours tell us about where the real risks live.