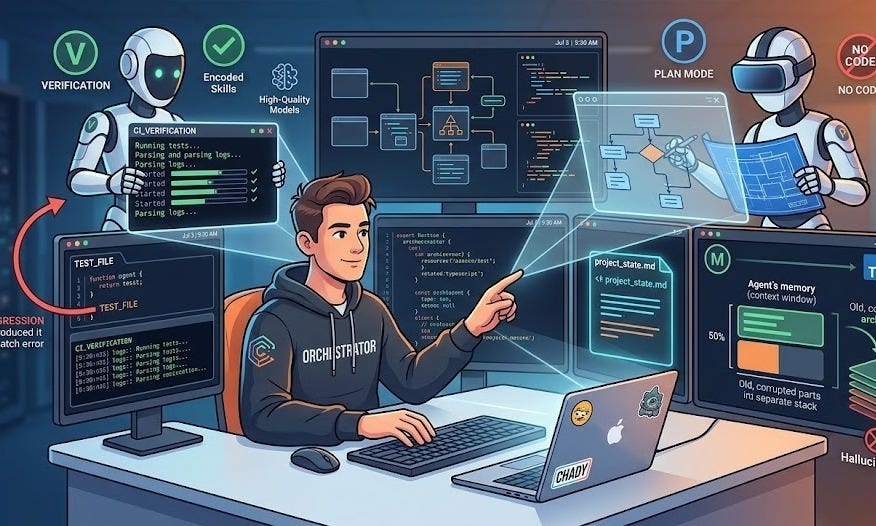

We are moving past the era of the chatbot. Today, coding agents are beginning to handle the heavy lifting of implementation, but they are only as good as the engineer directing them. Much like a musical instrument, an agent can produce 'slop' or a masterpiece; the difference lies in your technique. I’ve put together a few simple shifts to help you move from writing every line of code to orchestrating the bigger picture.

Access to Verification

The single most important factor in an agent’s success is whether it has access to verification. Without it, the agent is simply “guessing” based on patterns.

Provide Tool Access: Agents need to do what humans do: run the application, view logs, and perform tests.

Tighten the Feedback Loop: When an agent can see the output of its work—such as reading logs from a CI server—the quality of its code improves substantially.

Test the Tests: Agents often write code and tests at the same time, which can lead to tests that pass “by construction”. Always ask the agent to introduce a deliberate regression and confirm that the test fails; a test that still passes against broken code is not checking anything.
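To make "test the tests" concrete, here is a minimal sketch in Python. Everything in it is hypothetical and chosen only for illustration: the `apply_discount` function, its deliberately broken twin, and the `check_discount` test. The point is the last function, which only trusts the test if it passes on the real code and fails on the regression.

```python
from typing import Callable


def apply_discount(price: float, percent: float) -> float:
    """Correct implementation: reduce the price by the given percentage."""
    return round(price * (1 - percent / 100), 2)


def apply_discount_regressed(price: float, percent: float) -> float:
    """Deliberate regression: the discount is added instead of subtracted."""
    return round(price * (1 + percent / 100), 2)


def check_discount(fn: Callable[[float, float], float]) -> bool:
    """The test under scrutiny. Returns True if fn behaves as expected."""
    return fn(200.0, 25.0) == 150.0 and fn(80.0, 0.0) == 80.0


def suite_catches_regression() -> bool:
    """Trust the test only if it passes on the real code AND fails on the broken code."""
    passes_on_real = check_discount(apply_discount)
    fails_on_broken = not check_discount(apply_discount_regressed)
    return passes_on_real and fails_on_broken
```

In practice you would ask the agent to break the real function temporarily, run the suite, confirm a failure, and then revert the change, rather than keeping a broken copy around.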

Work in “Plan Mode”

Don’t ask an agent to do everything at once. You will get better results by separating the “thinking” from the “doing”.

The Power of Plan Mode: In this mode, a system prompt strictly forbids the agent from writing code. This allows the agent to use all its resources to understand the problem and design an architecture.

Human-Led Design: You must still do the work of breaking large, messy problems down into small, manageable tasks. If the scope is too big, agents may confidently produce “slop”: thousands of lines of code containing hidden bugs.

System Prompt: The background instructions that tell the AI how to behave (e.g., “do not write any code”).
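One way to picture the split between "thinking" and "doing" is as two session configurations. The sketch below is an assumption, not how any particular agent implements plan mode: the prompts, the tool names, and the `start_session` helper are all hypothetical. It also goes one step beyond the system prompt by restricting the tools available in plan mode, which is a design choice of this example.

```python
from dataclasses import dataclass
from typing import FrozenSet

# Hypothetical tool names; real agents expose their own tool sets.
READ_ONLY_TOOLS: FrozenSet[str] = frozenset({"read_file", "search_code", "list_directory"})
WRITE_TOOLS: FrozenSet[str] = frozenset({"write_file", "apply_patch", "run_shell"})

# Hypothetical system prompts for the two modes.
PLAN_PROMPT = (
    "You are in plan mode. Do not write any code. "
    "Read the codebase, ask clarifying questions, and produce a step-by-step plan."
)
BUILD_PROMPT = "Implement the approved plan one task at a time and verify each step."


@dataclass(frozen=True)
class Session:
    system_prompt: str
    allowed_tools: FrozenSet[str]


def start_session(mode: str) -> Session:
    """Return the prompt and tool set for the requested mode."""
    if mode == "plan":
        return Session(PLAN_PROMPT, READ_ONLY_TOOLS)
    if mode == "build":
        return Session(BUILD_PROMPT, READ_ONLY_TOOLS | WRITE_TOOLS)
    raise ValueError(f"unknown mode: {mode!r}")


def is_allowed(session: Session, tool: str) -> bool:
    """Check whether a tool call is permitted in this session."""
    return tool in session.allowed_tools
```

Blocking write tools as well as instructing the model means a plan session cannot modify files even if the agent ignores the prompt.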

Manage the “Context Window”

An AI’s “memory” is known as its context window. If this window gets too full, the AI’s performance “drops off a cliff”.

The 50% Rule: Try to keep your conversation history below 50% of the context window to maintain high accuracy.

Fresh Starts: If an agent starts going in circles or hallucinating, the context is likely “corrupted”. It is often better to close the session and start a new one.

Track State in Markdown: Keep a `.md` file in your codebase to track project progress. This allows a new agent session to “read the file” and catch up instantly without wasting memory.
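As a rough illustration of a progress file, the Python sketch below writes a task checklist and reads back what is still open. The file layout, the checkbox convention, and the function names are assumptions made for this example; any format a fresh session can read quickly would work just as well.

```python
from pathlib import Path
from typing import Dict, List


def render_progress(goal: str, tasks: Dict[str, bool]) -> str:
    """Render the goal and a checklist of tasks as markdown text."""
    lines = ["# Progress", "", f"Goal: {goal}", "", "## Tasks", ""]
    for name, done in tasks.items():
        mark = "x" if done else " "
        lines.append(f"- [{mark}] {name}")
    return "\n".join(lines) + "\n"


def remaining_tasks(markdown: str) -> List[str]:
    """Return the names of tasks that are still unchecked."""
    prefix = "- [ ] "
    return [line[len(prefix):] for line in markdown.splitlines() if line.startswith(prefix)]


def save_progress(path: Path, goal: str, tasks: Dict[str, bool]) -> None:
    """Write the progress file so a later session can pick up from it."""
    path.write_text(render_progress(goal, tasks), encoding="utf-8")
```

A new session can then be told to read the file first (for example a hypothetical `PROGRESS.md` at the repository root) and continue with the first unchecked task.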

Context Window: The maximum amount of information (text and code) an AI can “remember” at one time.

Hallucination: When an AI confidently provides information that is false or incorrect.
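The 50% rule is easy to turn into a quick check. The sketch below assumes roughly four characters per token, which is only a rule of thumb; the real ratio depends on the model's tokenizer and on the content. The function names and the window size passed in are hypothetical, and many tools report actual usage, which is better than any estimate.

```python
import math
from typing import Iterable


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough token estimate. The 4 chars/token ratio is an assumption, not a measurement."""
    return math.ceil(len(text) / chars_per_token)


def context_usage(messages: Iterable[str], window_tokens: int) -> float:
    """Fraction of the context window taken up by the conversation so far."""
    used = sum(estimate_tokens(message) for message in messages)
    return used / window_tokens


def should_start_fresh(messages: Iterable[str], window_tokens: int, limit: float = 0.5) -> bool:
    """True once the conversation reaches the chosen share of the window (50% by default)."""
    return context_usage(messages, window_tokens) >= limit
```

For instance, a history estimated at 100,000 tokens in a 200,000-token window sits exactly at the limit, so this check would suggest wrapping up and starting a new session.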

Additional Tips for Better Results

Pick the Right Language: Agents are currently most effective with TypeScript and Go because their libraries are “source available” (the AI can read the actual code). They struggle more with the JVM (Java/Kotlin) because those libraries are often bytecode that the agent cannot read.

Use High-Quality Models: Cheaper models often waste time and tokens by spiraling or deleting code they don’t understand. Using a top-tier model often solves the problem on the first try.

Encode Skills: If you find yourself giving the same instructions repeatedly, turn them into a Skill. This is like giving the agent a permanent “how-to” guide for a specific task.

Tokens: The basic units (words or parts of words) that AI models use to process and “read” text.

Skill: A saved set of instructions that an agent can automatically use whenever it needs to perform a specific job.

Conclusion: From Code Writer to Orchestrator

The arrival of AI doesn’t diminish the need for great engineers; it changes what they focus on. In the past, value was measured by the “depth” of knowledge in a narrow niche. Today, value is shifting toward breadth.

Because the agent can handle the “depth” of implementation, the human engineer must provide the “breadth” of general knowledge. Understanding how networking, security, and architecture connect allows you to act as an orchestrator, delegating tasks while maintaining the high-level judgment that keeps the system robust.

Don’t be discouraged if your first hour with a coding agent feels clunky. It takes practice to develop the skill to use them well. Keep experimenting, keep breaking down your problems, and always give your agent a way to verify its work.